Is IMDb Thinking Of Switching To A 5-Star Rating System, Or Prioritizing Critic Ratings?

Could the Internet Movie Database be considering a switch to a 5-star rating system, down from the 10-point user rating system it has employed for the last 25 years? IMDb users have recently been asked to take a survey which suggests the movie database is considering changes to the way it presents opinions on the site. The survey even indicates that IMDb is considering giving film critics' opinions a bigger presence on the site. Could the IMDb rating system be in for a dramatic change?

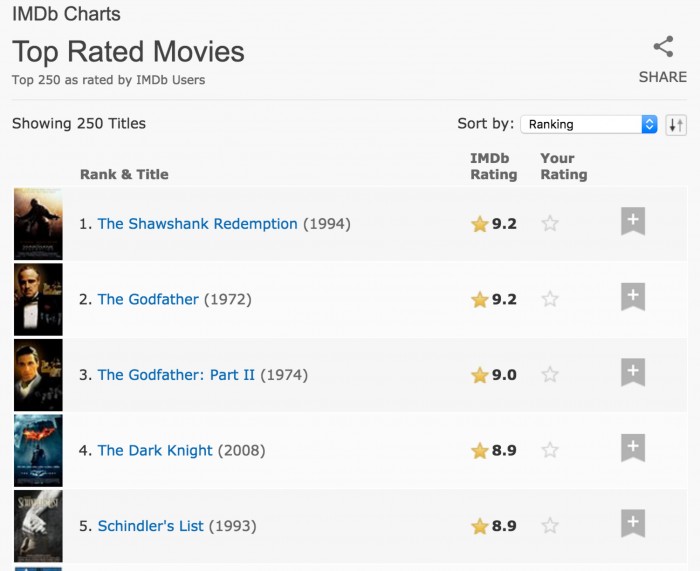

Among the many questions in the survey, IMDb asked visitors if they preferred a five-star rating system or the 10-point rating system currently on the site. They asked users to pinpoint how many stars or points determine if a movie is considered "good" on both of the scales.

Say what you will about the IMDb user ratings, but as someone who grew up using the site on a daily basis, I have found it to be highly valuable for film discoveries and suggestions. Nowadays, a squad (pun intended) of fanboys can quickly inflate a movie's user rating, especially in the weeks around release. This certainly makes that data harder to trust in the early months. But IMDb is the largest sample of moviegoer opinions available anywhere, and as that sample grows, like any poll, the data tends to become more representative of mass audiences.

I think these numbers can be fascinating, and I still look at them to this day. Even in a world where we have apps like Letterboxd, I still find myself looking at the ratings from the IMDb crowd because, like I said, there is no larger sample anywhere. Does The Dark Knight deserve to be the #4 best film of all time? Probably not. But it's interesting that users of the service have rated the film so highly. (It's worth noting that the film was ranked the #33 best film of the last century according to critics.)

One of the things I hate about Letterboxd is they force me to use the five-star rating. I have grown up on a 10-point scale with half-point increments and have always found the five-star system to be too restrictive. Sure, you can move the meter by half a star, making it virtually a 10-point scale, but it just doesn't compare to the 20 possible configurations offered by the 10-point system. I'm sure many of you are even questioning if any rating system is necessary. Why reduce your opinion of a movie down to a single number? This is an argument for a different place and a different time.
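For anyone who wants to check my math on those configurations, here is a quick sketch. The `scale_values` helper is my own hypothetical name, and it assumes the lowest possible rating is a single half step (so no zero rating), which may not match how any particular site works.

```python
def scale_values(max_score, step=0.5):
    """List every rating a scale allows, from one step up to max_score.

    Assumption: the lowest rating is a single step (a zero rating is not allowed).
    """
    count = round(max_score / step)
    return [step * i for i in range(1, count + 1)]


five_star = scale_values(5)    # half-star increments
ten_point = scale_values(10)   # half-point increments

print(len(five_star), "possible ratings on the five-star scale")
print(len(ten_point), "possible ratings on the 10-point scale")
```

Under those assumptions, the half-star scale gives you 10 choices and the half-point scale gives you 20.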

I can understand why IMDb might be considering switching to a five-star rating system, since it gives new users less to think about. Netflix and other popular movie rating systems use the five-star system. And for use on a movie-by-movie basis, it's not a bad way to go. Where it gets restrictive is when you want to compare a rating for one movie against the rating you gave another movie. Or perhaps look at a descending ranking of your grade history (or the average rating history of all users, i.e. the IMDb top 250). The more points a scale offers, the more precise and less general your ratings can (and tend to) be.

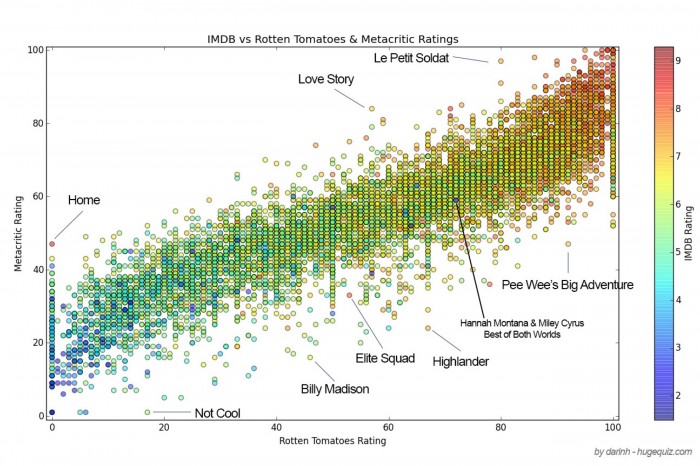

IMDb also questioned if visitors preferred movie critic opinions over those of average users. It's possible that IMDb is looking to see if film critic opinions even matter anymore to mainstream audiences. Could IMDb be considering demoting critical ratings from the site? I don't think so. The same survey asked visitors which service was preferred: Rotten Tomatoes or Metacritic.

Both of those services round up movie reviews and present an average score to represent the critical consensus opinion of any given film. Rotten Tomatoes' Tomatometer is based on whether a critic liked a movie or not, with the percentage representing not the average critic score but the percentage of film critics who "liked" a movie. On the other hand, Metacritic presents an average of all the film critic scores, but with a much smaller and more restrictive sampling of film critics.

Source: IMDB (via OMDB API), Rotten Tomatoes – all movies with a rating in all three were used, approx. 8600 total.

Both services seem to have their advantages and disadvantages. With Rotten Tomatoes, for example, 100 film critics could all rate one movie a "7" out of 10, and that movie would be 100% fresh on the Tomatometer. Another film could have 90 critics rate it a "10" while ten reviewers rate it a "6", and assuming those "6" reviews count as negative, that film would get only a 90% Tomatometer rating despite the much higher scores.
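To make that arithmetic concrete, here is a minimal sketch of the two hypothetical films. The function names and the cutoff of 7 out of 10 for a "liked" review are my own assumptions for illustration; Rotten Tomatoes marks each review fresh or rotten rather than applying a fixed numeric cutoff, and the plain mean below is not Metacritic's actual formula.

```python
# Assumption: a score of 7/10 or higher counts as the critic "liking" the movie.
LIKED_CUTOFF = 7


def percent_liked(scores, cutoff=LIKED_CUTOFF):
    """Tomatometer-style number: the share of critics at or above the cutoff."""
    liked = sum(1 for score in scores if score >= cutoff)
    return 100 * liked / len(scores)


def average_score(scores):
    """Plain mean of all critic scores."""
    return sum(scores) / len(scores)


film_a = [7] * 100              # 100 critics all give a 7
film_b = [10] * 90 + [6] * 10   # 90 critics give a 10, ten give a 6

for name, scores in (("Film A", film_a), ("Film B", film_b)):
    print(f"{name}: {percent_liked(scores):.0f}% liked, "
          f"average {average_score(scores):.1f}/10")
```

Film A comes out at 100% liked with a 7.0 average, while Film B comes out at 90% liked with a 9.6 average. The percentage rewards agreement, not enthusiasm.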

My big problem with Metacritic is that I find the sampling of critics in their service to be much too small. For example, the Metacritic score for Blair Witch is based on only 44 reviews, while Rotten Tomatoes counts 155 reviews for the same movie. I always believe that a larger sample is better, because there is less of a chance that one or two or three contrarians will really mess with the overall data.
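Here is a rough sketch of why sample size matters, using the two review counts above. Everything else is made up for illustration: the consensus score of 80, the three contrarians who each give a 0, and the `mean_with_contrarians` helper are all hypothetical, and a plain average stands in for whatever weighting a real aggregator applies.

```python
def mean_with_contrarians(total_reviews, contrarians,
                          consensus=80, contrarian_score=0):
    """Average score when a few reviewers break from an otherwise uniform consensus.

    All scores here are hypothetical values on a 0-100 scale.
    """
    agreeing = total_reviews - contrarians
    scores = [consensus] * agreeing + [contrarian_score] * contrarians
    return sum(scores) / len(scores)


for total in (44, 155):
    average = mean_with_contrarians(total, contrarians=3)
    print(f"{total} reviews: average {average:.1f}, "
          f"dragged down {80 - average:.1f} points")
```

With these made-up numbers, the same three contrarians pull the 44-review average down by roughly five and a half points, but move the 155-review average by only about a point and a half.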

IMDb currently features Metacritic ratings on their pages, but the score is given lower and less prominent placement compared to the IMDb user rating that appears on the top of the page, next to the film title.

CBS Interactive owns Metacritic, while Rotten Tomatoes is owned by Fandango Media, which sits under the umbrellas of Comcast/NBCUniversal and Warner Bros./Time Warner. Amazon, of course, owns IMDb. While IMDb appears to be currently licensing the Metacritic data, there is nothing to stop it from switching to Rotten Tomatoes. I don't think that IMDb would deprioritize its own users' ratings, as that is proprietary data and it's a system that brings many people to the site.

While nothing is set in stone, I believe that the survey raises some interesting questions. Do you prefer a ten-point rating system or a five-star rating system? Should IMDb prioritize a film critic rating system like Rotten Tomatoes or Metacritic over their own user ratings? Leave your thoughts in the comments below.

Please note: The survey no longer seems to appear on the IMDb site.